Took most of this from my github repo:

Seeing GANs create fake faces from real ones made me wonder whether I could create fake GBA Fire Emblem faces from the real ones.

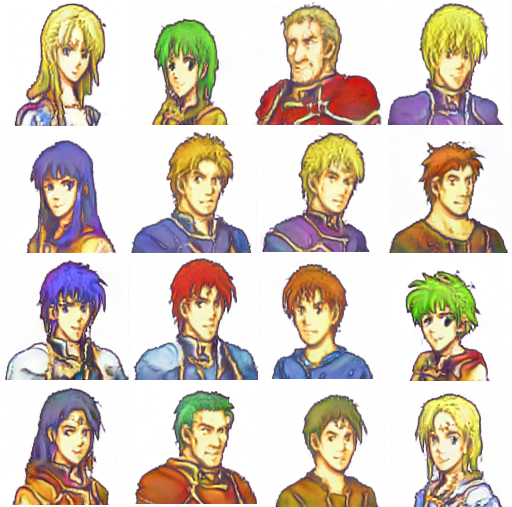

Generated Fakes Example

Generated Fakes training (from 0th kimg to 480th kimg) video on YouTube:

How do I use this?

- Have a Google account.

- Click on this link and follow the instructions.

Alternatively, you could do it the long way: click on the file Demo_FE_GBA_Portraits.ipynb here on the GitHub repo and then press the button Open in Colab when it shows up. If the steps are slightly confusing, check out this tutorial video (it’s sped up to 150%).

Why is it on Google Colab?

StyleGAN2 requires a CUDA-enabled GPU and I don’t have one. Plus, CUDA GPU hosting is costly (about $0.9/hr on AWS, AFAIK), so I’d rather have it on a free service like Colab, since it works 24/7 for free unless you overuse/abuse it.

Does anything get downloaded on my computer?

No, unless you save the images. All operations happen on the virtual machine offered by Google Colab.

How many different images can it generate?

Well, theoretically, there are 5 models and each model can generate 2^32 images, so 5 * 2^32 = 21,474,836,480 in total. Practically, there is no way of knowing how many of those are distinct and usable, because a lot of images are similar or just too bad (helmet heads or double-facing heads).
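As a quick check of the arithmetic, here is a tiny sketch. It assumes the 2^32 figure comes from each model taking a 32-bit integer seed; the constant names are just for illustration.

```python
# Assumption: each model accepts one 32-bit integer seed (0 .. 2**32 - 1),
# and every seed maps to one generated image.
NUM_MODELS = 5
SEEDS_PER_MODEL = 2 ** 32

total_images = NUM_MODELS * SEEDS_PER_MODEL
print(f"{total_images:,}")  # 21,474,836,480
```

This is only an upper bound on the number of outputs; it says nothing about how many of them look different or good.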

Because of how StyleGAN/StyleGAN2 works, the input and output images have to be squares whose height and width are a power of 2 (think 32x32, 64x64). Since the portraits were 96x80, I resized them to 128x128. Hence, the output images will be 128x128, so you may have to crop and resize them down. There is no guarantee that the colors will fall in the GBA range either, but many of the images can be made suitable for ROM hacks with a simple resize and custom indexing of the colors down to 16. So yes, some post-processing is needed, but the generated images are in the same style as FEGBA portraits.
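To give a rough idea of what that post-processing involves, here is a minimal pure-Python sketch. It is not the tool's own code, and all function names are made up for illustration. It assumes pixels are (R, G, B) tuples with 8 bits per channel, uses the fact that the GBA stores 5 bits per color channel, and picks the 16 most frequent colors as the palette, which is a very naive approach compared to what an image editor would do.

```python
from collections import Counter

def is_power_of_two(n: int) -> bool:
    """True for 1, 2, 4, ..., 128, ... (the side lengths StyleGAN2 accepts)."""
    return n > 0 and (n & (n - 1)) == 0

def snap_to_gba(rgb):
    """Keep only the top 5 bits of each 8-bit channel (GBA colors are 15-bit)."""
    return tuple((c >> 3) << 3 for c in rgb)

def index_to_16(pixels, max_colors=16):
    """Naive palette reduction: keep the most common colors and map every
    other pixel to its nearest palette entry (squared RGB distance)."""
    snapped = [snap_to_gba(p) for p in pixels]
    palette = [color for color, _ in Counter(snapped).most_common(max_colors)]

    def nearest(color):
        return min(palette, key=lambda p: sum((a - b) ** 2 for a, b in zip(color, p)))

    return [nearest(c) for c in snapped], palette

# 96 and 80 are not powers of two, 128 is.
print(is_power_of_two(96), is_power_of_two(80), is_power_of_two(128))

# Hypothetical pixel data: 40 different shades reduced to at most 16 colors.
pixels = [(i * 6, 255 - i * 6, 128) for i in range(40)]
indexed, palette = index_to_16(pixels)
print(len(palette), len(set(indexed)))
```

In practice you would load and save the image with an image library or editor and would probably want a smarter quantizer, but the steps (check the size, snap to GBA colors, index to 16) stay the same.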

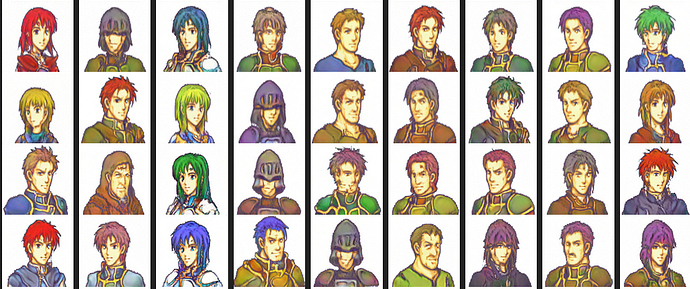

Thanks to spriters for providing the training data:

- Aegius (Zach) who gave ~30 portraits from his project (Necrosis Among the Living).

- Fire Emblem Mugs by Atey who has posted the linked portraits for use freely.

- Servants from FateGO in the GBA portrait style, Collection of Fire Emblem Fates GBA Mugs, and Nohr in GBA Style by u/Toaomr on Reddit and Twitter (@toaomr).

- Fire Emblem Custom mug GBA spread sheet by caringcarrot

- Free to use NICKT collection by NICKT

License

This project was made purely for educational/research purposes, and the code base is strictly non-commercial, as it is licensed under the Nvidia Source Code License-NC because of its use of StyleGAN2. You are free to use this tool (credit appreciated but not required) as long as you use it responsibly and non-commercially, but we are not responsible for any uses (read the license for more details). Do not ask us for the training dataset. We do not have permission to redistribute the artists’ works.

References:

- Steam StyleGAN2 by woctezuma

- The original StyleGAN2 repository

- @woctezuma’s fork of StyleGAN2 for easily saving results to Google Drive.

StyleGAN2 citation:

@article{Karras2019stylegan2,

title = {Analyzing and Improving the Image Quality of {StyleGAN}},

author = {Tero Karras and Samuli Laine and Miika Aittala and Janne Hellsten and Jaakko Lehtinen and Timo Aila},

journal = {CoRR},

volume = {abs/1912.04958},

year = {2019},

}