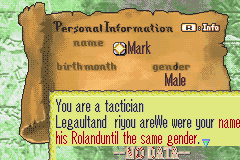

The RAM gets funky when you have four portraits and map sprites loaded at the same time. Either have a background when you have to have four portraits or just have three portraits on the map and Load/Clear them as needed.

this issue wouldn’t happen if marth wasn’t running. if there are no active map sprites, 4 portraits can be onscreen in front of the map.

https://cdn.discordapp.com/attachments/235104303520415745/255451544390991873/breaks_after_ch2…_01.png

Great. Machis, we all know you’re bad. Can you not infect the game with your badness?

at zahlman’s request be graced by two gifs of me trying to make an item that can be Used to recover HP.

https://cdn.discordapp.com/attachments/235104303520415745/257323811030958091/nyoynoo.gif

(the first attempt at this caused the game to lock up when being used to heal so i’m relatively happy since it no longer does that)

reclass to wyvern rider soon

nailed it

(Spoilers: I fixed it a few minutes later, and explaining what went wrong would require some considerable background explanation even for other ASM wizards)

(Immediately after this was posted, Wan and Cam asked zahl for more explanation. Said explanation follows.)

so I have this super-optimized encoding for the huffman tree, and among other nice things, it supports ‘supernodes’

where it grabs several bits at once and uses them to short-circuit multiple levels down the tree

in order to make that work, conceptually I make three types of nodes: “twigs” (that just have 2^n leaves selected directly with n bits), “branches” (that select from 2^n internal nodes in the same way), and “flowers” (that have a leaf as the left child and an internal node as the right child)

I can hand-wavingly prove that I can always build the tree out of these nodes, with the algorithm that the code uses

anyway, so I had to write totally new asm to do the actual decoding, yeah

the other part of the story is that the nodes have this haxxed encoding, where it uses 4 bits to store both the node type and the node ‘size’: 0 = flower, 1-10 = twig (and its size), 11-15 = branch (size + 10)

(size is number of bits to use)

so what went wrong was, I correctly set it up to subtract 10 from the branch sizes, and use the twig sizes directly, but for flowers, it still had the 0 in the "number of bits to grab from the huffman stream" register (which is also used for multiple other things at various points)

which is wrong, because it needs 1 bit from the stream to decide whether to use the leaf or the twig

it was always using the leaf.
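(For anyone following along: a minimal Python sketch of the 4-bit node header described above, with the flower case reading 1 bit instead of 0. The real decoder is hand-written asm; the function name `decode_node_header` and the tuple it returns are made up for illustration.)

```python
def decode_node_header(nibble):
    """Map a 4-bit node header to (kind, bits_to_read_from_stream).

    Encoding as described in the chat:
      0      = flower
      1-10   = twig, size is the value itself
      11-15  = branch, size is the value minus 10
    """
    if not 0 <= nibble <= 15:
        raise ValueError("node header must fit in 4 bits")
    if nibble == 0:
        # The bug: leaving 0 here means no bit is read, so the leaf is
        # always chosen. A flower needs exactly 1 bit to pick a child.
        return ("flower", 1)
    if nibble <= 10:
        return ("twig", nibble)
    return ("branch", nibble - 10)


for header in (0, 3, 10, 11, 15):
    print(header, decode_node_header(header))
```

Keeping the "how many bits to grab" value next to the node kind makes it harder to forget that the flower's header value of 0 is a type tag, not a bit count.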

previously detected, much less entertaining bugs:

- failure, in the code that unpacks bits from the stream, to keep track correctly of the number of bits actually used

- after getting to a leaf, an error in looking up the corresponding symbol’s offset in the symbol table (I had an index into an array of shorts, forgot to multiply it by 2, basically)
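(The second bug in the list above, sketched in Python under the assumption that the symbol table is a packed array of 16-bit little-endian values. `lookup_symbol_offset` and the sample table contents are hypothetical.)

```python
import struct


def lookup_symbol_offset(table, index):
    """Read entry `index` from a packed array of 16-bit shorts."""
    # Each entry is 2 bytes wide, so the byte offset is index * 2.
    # Using `index` directly as the byte offset is the bug described above.
    return struct.unpack_from("<H", table, index * 2)[0]


# Hypothetical table with four entries.
table = struct.pack("<4H", 0x0010, 0x0020, 0x0030, 0x0040)

correct = lookup_symbol_offset(table, 2)
buggy = struct.unpack_from("<H", table, 2)[0]  # forgot the * 2
print(hex(correct), hex(buggy))
```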

Nerd

I still have several more things planned that I could do to save even more space, but I’m shelving that in favour of, basically, the stuff I described in my FEE3 demo.

fe8 when tho

Pretty much whenever, tbh, I think I already have all the notes I need actually

but I kinda want to finish this conceptual chunk first.

(In case you’re wondering where Seth & Franz went; pressing A on them and then hitting B caused them to unexist)

Don’t write asm by hand && doubly so when sleep deprived

This was caused by uh

ORG 0x00087308

BYTE 0x08 0x80 0x46 0xC0 0x46 0xC0

ORG 0x0001CB7E

BYTE 0x46 0xC0 0x46 0xC0

ORG 0x00032E54

BYTE 0x46 0xC0 0x46 0xC0

Which is written in Big as opposed to Little Endian because I am dumb
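(A quick Python check of the byte order, assuming each `0x46 0xC0` pair above was meant to be the Thumb halfword 0x46C0, i.e. `mov r8, r8`, commonly used as a no-op. The GBA's CPU is little-endian, so the low byte goes first. The helper name `halfword_bytes` is made up.)

```python
import struct

THUMB_NOP = 0x46C0  # assumed intent of the patch: mov r8, r8


def halfword_bytes(value):
    """Return the two bytes of a 16-bit value in little-endian order."""
    return list(struct.pack("<H", value))


little = halfword_bytes(THUMB_NOP)
big = list(struct.pack(">H", THUMB_NOP))
print([hex(b) for b in little])  # order the ROM expects
print([hex(b) for b in big])     # order used in the BYTE lines above
```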

Even worse, though, after I corrected that it STILL was broken:

However, that time it was caused because I had read the registers wrong (I did lsr r0,r0,2 when I wanted to do lsr r0,r3,2)

And now I have every unit’s Move value tending towards 10~12 on the stat screen (seriously. the Demon King gets +7 move, classes with 5 move get +6 move, classes with 9 move get +3 move etc and I can’t figure out why)

Kids, don’t use the special Nintendo tiles in normal text.

Found a cool glitch. You need to see it in action in order to get the full effect.

Put the following into your UNIT Bad:

UNIT 0xC1 0x10 0xC1 Level(7,Enemy,True) [0,0][18,8] [SteelSword, 0, 0, 0] [0x0D,0x03,0x01,0x00]

UNIT 0xC1 0x10 0x00 Level(8,Enemy,True) [0,0][18,9] [IronSword, 0, 0, 0] [0x0C,0x06,0x02,0x00]

UNIT 0xC1 0x10 0xC1 Level(6,Enemy,True) [0,0][18,10] [0x10, 0, 0, 0] [0x0D,0x03,0x01,0x00]

UNIT 0xC1 0x10 0x00 Level(8,Enemy,True) [0,0][18,6] [SilverSword, DoorKey, 0, 0] [0x0D,0x03,0x01,0x00]

UNIT 0xC1 0x10 0x00 Level(7,Enemy,True) [0,0][18,5] [0x0E, 0x0F, 0x13, 0] [0x0C,0x06,0x02,0x00]

UNIT 0xC1 0x10 0x00 Level(8,Enemy,True) [0,0][18,4] [0x12, SilverSword, 0, 0] [0x0D,0x03,0x01,0x00]

If done correctly, before the first swordmaster moves, the music will start screeching horribly (or the game will reset [I am unsure of how to control whether it progresses or resets]). If the game continues, then once a second one moves, the game will fade into a Game Over. The only difference from a normal game over, however, is not the screeching. It is the fact that ALL your save files (on the current battery) will be deleted, and you will be forced to start a new game.

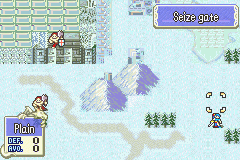

First map I’ve ever inserted! But that corner over there is off. better fix it

Yea that’ll do

i, uh, wasn’t able to replicate this. I’m gonna have to see a vid or something

Well, let’s see how those–

[Demon screech x 6]

dammit ok let’s try this again–

IT DIDN’T BREAK BUT

This flickers whenever the text boxes are cleared or characters move etc:

OR MAYBE IT’S BECAUSE THE BITMAP IS COLOR INDEXED?

[no]

AFTER INTENSIVE DEBUGGING

it was because the portrait was not word aligned.
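(A small Python sketch of the alignment check, for picking an insertion offset before writing graphics data. "Word aligned" here means the address is a multiple of 4; the helper names and the example addresses are hypothetical.)

```python
def is_word_aligned(addr):
    """True if addr is a multiple of 4 bytes."""
    return addr % 4 == 0


def align_up(addr, alignment=4):
    """Round addr up to the next multiple of alignment (a power of two)."""
    return (addr + alignment - 1) & ~(alignment - 1)


# Hypothetical free-space offset that is off by two bytes.
portrait_addr = 0x08B00002
if not is_word_aligned(portrait_addr):
    portrait_addr = align_up(portrait_addr)
print(hex(portrait_addr))
```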

aw you left out the best part

doesn’t look like anyone is interested in maintaining order actually